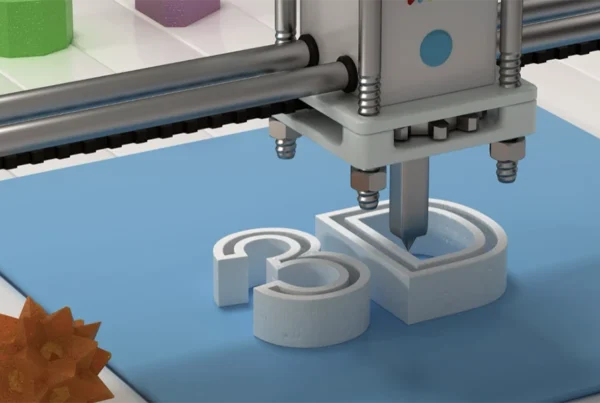

The global cloud computing market is valued at $1.04 trillion in 2026 and is projected to reach $2.65 trillion by 2031, a compound annual growth rate of 20.65%, according to Mordor Intelligence’s January 2026 forecast. Enterprise AI adoption has moved alongside it: McKinsey’s November 2025 State of AI report, surveying 1,993 participants across 105 nations, found that 88% of organizations now use AI in at least one business function, though only 23% have scaled agentic AI systems and fewer than a third report scaling AI enterprise-wide. That volume has created a quieter problem. When everyone claims innovation, someone has to decide which claims actually hold up.

Chandra Sekhar Kondaveeti has made that sorting process part of his judging practice. In 2025 and 2026, he has served as a Globee Awards judge in the Artificial Intelligence, Cybersecurity, and Technology categories, evaluating enterprise nominations in the three areas where vendor claims move fastest and independent verification is hardest.

Enterprise Complexity Has Outrun Informal Review

Enterprise software sprawl is now measurable. The average enterprise runs around 1,200 unofficial applications outside formal IT visibility, according to Kiteworks research cited in IBM’s 2025 Cost of a Data Breach Report, and 86% of organizations say they cannot track how data flows through AI systems inside their own perimeter. Cloud consolidation has continued at the same time, with large enterprises holding 53.12% of 2025 cloud spend according to Mordor Intelligence, and more than 60% of enterprise workloads already migrated to cloud environments per industry tallies.

Across his 2025 and 2026 jury assignments, Kondaveeti has read that complexity directly. The submissions crossing his desk argue, year after year, that enterprise platforms now span too many tools, too many vendors, and too many parallel AI pilots for any single internal team to audit. The harder job falls to those asked to judge the result: the few reviewers who can examine a nomination, test its claims against independent evidence, and decline to reward the packaging when the underlying architecture does not hold up.

“A submission’s real quality only becomes visible when someone who is not the author has to stress-test it,” says Chandra Sekhar Kondaveeti. “That is why expert evaluation matters more now than it did ten years ago. The volume of claims arriving at a jury’s desk has grown faster than the number of evaluators who can credibly read them.”

The Cybersecurity Case for Expert Evaluation

The global average cost of a data breach fell to $4.44 million in 2025, according to IBM’s annual Cost of a Data Breach Report, the first decline in five years. The United States moved in the other direction, reaching a record $10.22 million average, and healthcare remained the costliest industry for the fifteenth year running, averaging $7.42 million. Shadow AI alone added $670,000 to the average incident, 63% of breached organizations lacked any AI governance policy at all, and 97% of AI-related breaches occurred at companies without proper access controls.

That gap between deployment and governance is exactly what cybersecurity juries now spend their time trying to surface. Kondaveeti’s judging across the cybersecurity category has concentrated on the area where claims and evidence most often diverge, separating submissions whose authors can demonstrate the controls they describe from those whose narrative outpaces their telemetry. The gap between detecting a breach and containing it closes only when the people evaluating a program understand not just what a control is supposed to do, but what each failure mode will look like once the traffic is real.

“Most breaches are not exotic,” Kondaveeti notes. “They are the result of governance that did not keep up with deployment speed. The number that matters is how quickly your own team finds the problem before someone else does.”

The Credentialing Layer

Before any technology claim reaches a procurement conversation, it usually passes through a layer of professional peer review that sorts credible work from the rest. Academic and industry publishing depend on a review corps that is already overstretched: roughly 200 peer-reviewed engineering journals run by IEEE alone, more than 1,700 conference proceedings each year from the same body, and approximately 30% of global literature in electrical, electronics, and computer engineering passing through its review infrastructure. The bottleneck is reviewer capacity, and editorial boards and program committees are where it bites.

Kondaveeti sits inside that credentialing layer as a program juror. In 2025, he served on the program jury for the International Conference on Data Science and Applications (ICDSA 2025) in Jaipur, where his reviewing spanned submissions across AI systems, enterprise platforms, and applied machine learning. Positions of this kind carry a working load rather than a ceremonial one. Each submission returned with a meaningful decision requires a reviewer who can read the claim, test the evidence, and articulate what separates a sound contribution from a plausible one.

“Sitting on a program jury is not about the letterhead,” Kondaveeti reflects. “It is about the obligation that comes with the seat. When you agree to evaluate someone else’s research, you are staking your own professional judgment on whether the work is sound. That should not be delegated to anyone unfamiliar with what it takes to read evidence carefully.”

The AI Question Raises the Stakes

McKinsey’s 2025 State of AI report found that while 88% of organizations now use AI, most remain in what the report calls “pilot purgatory,” with only 31% reporting enterprise-wide scaling and fewer than 10% of vertical use cases reaching production. IBM’s parallel research indicates that organizations using AI and security automation extensively shorten breach lifecycles by an average of 80 days and save nearly $1.9 million per incident compared with organizations that use neither. At the same time, 1 in 6 breaches now involves attackers using AI, most commonly for phishing at 37% and deepfake impersonation at 35%. The asymmetry is becoming the operative problem for the field.

Kondaveeti’s recent judging has sat directly on that line. The AI submissions crossing his desk over the last twelve months have tilted toward governance, compliance, and access control questions rather than model novelty or benchmark theater. The consistent pattern across the entries worth accepting is that generative systems do not reduce the need for expert review. They multiply it, because the volume of claims now produced each quarter scales faster than any single awards program or conference committee can process on its own.

“Automation can do a great deal, and it should,” Kondaveeti concludes. “But somebody still has to decide what good looks like, and then hold the line on it. That judgment is not something a model can make for you. It will remain a human call for a long time, and the people doing it well will continue to be the ones who have spent real hours reading evidence, case after case.”

Inside the Peer Review Pipeline

The volume moving through academic peer review has grown sharply. NeurIPS 2025, one of the larger venues in machine learning, received 21,575 main-track submissions, well above its 2024 figure and roughly 2.3 times the 2020 count, and assembled a review corps of 20,518 reviewers, 1,663 area chairs, and 199 senior area chairs to process them. Acceptance held near 24.5%, consistent with prior years. Smaller specialist venues run on similarly tight margins. CISCom 2025, for instance, desk-rejected more than 70% of its 283 submissions before the substantive review stage even began. The bottleneck in the system is not submissions. It is the supply of qualified reviewers.

Kondaveeti has spent 2025 and the opening months of 2026 inside that pipeline. He contributes paper reviews through the Precision Conference Solutions platform used by ACM and allied venues, where his review assignments have concentrated on AI systems, enterprise platforms, and applied machine learning. That reviewing sits alongside his 2025 and 2026 jury appointments across industry awards and academic conferences, forming a consistent slate of evaluation roles in a period when the volume of submissions has outpaced the supply of qualified reviewers.

“Most of what crosses a reviewer’s desk is competent,” Kondaveeti observes. “What separates the few papers worth accepting is rarely the headline claim. It is the evidence. People who can show their telemetry, their failure modes, and the trade-offs they accept tend to do better than people with slicker narratives.”